Big Brother is Liking Your Post

The Intersection of Data Colonialism and Social Media Intelligence

Abstract

Due to profit incentives offered by targeted marketing, social media platforms have created an environment of data disclosure through which law enforcement can use public social media posts to track down and arrest suspects. In this paper, I identify the economy that encourages platforms to augment tools of data disclosure, the connection of this environment to law enforcement, and, through case studies, demonstrate the power offered by this new law enforcement tactic. Because the root of this issue is in private social media platforms, I argue that these companies must be regulated ahead of regulating law enforcement.

Figures

Figure 1

Author: Adam Simpson

Introduction

Lilly Simon was taking the subway home in 2022 when a stranger filmed her, zooming in on bumps on Simon’s skin. It was the peak of a monkeypox (mpox) outbreak in New York City; an emoji of a monkey and a question mark was all the poster needed to insinuate that Simon had mpox. Lilly Simon did not have mpox. Lilly Simon has neurofibromatosis type 1, a genetic condition that causes tumors to grow along her nerves. The post received comments mocking Simon for being out in public. Some even threatened her life.

This behavior, filming a stranger in public, is a rather common occurrence, and has gone under many names. One trend on TikTok calls the people being filmed “NPCs,” meaning “non-player characters,” a video-game term. NPCs are not real people, only collections of behaviors and dialogue responses. On social media, users will film someone acting out of place or awkwardly in public, and mark the subject as an NPC. Doing so is a means of deeming that person’s behavior strange. The behavior of such so-called NPCs ranges from someone simply standing idly to what is called a “public freakout.” “Public freakout” refers to someone getting upset for any reason in public, though videos of so-called freakouts tend to carry with them the stance that the subject does not have a right to be upset. Filming people in public goes beyond just these TikTok trends. Reddit, a social media platform made up of self-directed communities called subreddits, has a number of communities dedicated to posting videos of strangers in public. The subreddit r/NPC_irl, started in 2017, focuses on the same behavior as the aforementioned TikTok trend of the same name; r/TookTooMuch, founded in 2015, is dedicated to filming drug-induced breakdowns; and r/ImTheMainCharacter contains posts where the subject of each video is deemed to be full of themselves. Reddit is so interested in this kind of social media post that it has four subreddits dedicated simply to public freakouts: r/freakout, r/FreakoutVideos, r/FreakoutPublic, and finally r/PublicFreakout. Evidently, Reddit is rife with posts showing strangers filmed in public. Blogs such as the long-standing “People of Walmart” were an early iteration of documenting strangers in public. People of Walmart featured user-submitted photos of unsuspecting Walmart shoppers minding their own business. All of these various forms fall under the umbrella of non-consensual filming.

Receiving hate comments and death threats following a social media post, as Lilly did, is unfortunately only the surface level of possible consequences. Videos of someone in public such as Lilly’s could have much more legally serious repercussions. To law enforcement, such videos fall into the category of social media intelligence, or SOCMINT, a sub-category of open-source intelligence (OSINT). Social media intelligence encompasses a vast amount of digital material, including Facebook posts, tweets on Twitter, YouTube videos, and Instagram photos, videos, and comments, to name a few (Edwards, Urquhart 2015, 2). Anything that is publicly posted falls under this umbrella. Because these are posts made publicly, law enforcement deems them usable in warranting arrests. While police have used photos submitted by citizens for the purpose of law enforcement in the past, the growing use of SOCMINT, combined with the adoption of machine learning programs, particularly facial recognition programs, by law enforcement, is cause for concern. This combination of social media intelligence and facial recognition has been used recently by law enforcement in the United States to arrest people on both ends of the United States political spectrum: Black Lives Matter protestors in 2020 and pro-Trump insurrectionists on January 6th, 2021. These two instances make up the two main case studies this paper will discuss. Such arrests would not have been possible without a culture accustomed to posting in large volume on social media.

Social media thrives on connection. Digital publics like social media platforms have become a place to engage with community, share experiences, and hold public discourse (Staab, Thiel 2022). Adoption of social media happens as trends do; people join to connect with friends already on the platform (Trottier 2012, 68). Social media platforms offer opportunities to feed this desire to connect and encourage users to engage as much as possible. On Instagram, for example, there are platform affordances allowing users to check in throughout their day with story updates. Facebook is even more direct with status updates. BeReal, a social platform that pings your device at a random point during the day, takes this to the next level, encouraging a check-in at a synchronized time. Instagram allows users to tag their photos with a specific location. I could post an Instagram story of myself writing this very paper and be so granular as to tag the exact dorm building I am in. Instead of calling a friend or even talking to someone in person about where I write my paper, I can post updates on my Instagram story. I could write on Facebook. I might even post my BeReal while writing this paper, if the timing worked out. We are awash with ways to post on social media.

But why? Why do social media platforms offer so many means of posting, and why do we engage with them on the level that we do? To answer this, look not to the users, but to the platforms themselves. Motivated by a desire to market more effectively to users, platform owners have created an ecosystem in which users are encouraged to disclose their own personal data and that of others, opening the door for law enforcement to use social media posts to arrest people using tools designed by for-profit companies. Should we desire to regulate the use of social media intelligence, those legislative efforts must start with private companies or the cultures of data disclosure they encourage.

Two years ago I became deeply invested in the privacy of my data. Among my peers, there is certainly an awareness that the platforms and devices we use track us and form data profiles for us. This awareness is especially present on social media, where users will discuss shaping their algorithm, tricking the algorithm, or remark on how interacting with a certain post will mess up their algorithm. Anecdotally, I have even witnessed an awareness among my peers of how advertisers track you. Despite this, the darker consequences remain obscured or vague in my peer groups. Advertisers feed back targeted content, sure, but that seems like a low price for the free use of digital platforms. It is hard to say where this attitude arose from, but it certainly benefits those interested in collecting and selling data. The less concern users have for their data, the more they will disclose.

Witnessing the NPC trend on TikTok some years ago struck a chord with me. The behavior immediately struck me as wrong, but the reason behind this feeling was not apparent. It was digging into this behavior and the possible consequences that led me to social media intelligence. As my research continued, discovering that these socially hazardous videos could be legally hazardous as well was a terrible revelation. I have remarked through my research and writing of this paper that I feel both vindicated and disheartened. My suspicions have been proven but my fears realized.

In such a big world, why should a company care about my specific data? In discussions with peers I would often hear that sentiment expressed. The field of surveillance studies is vast and daunting. It feels disconnected from reality, and is difficult to parse. I felt that if I were able, I ought to find a way to connect privacy concerns to the real lives of my peers. My hope is to present my ideas clearly, to inform my peers, and thereby to reduce the harm that they may experience as a result of data disclosure.

Before entering into my argument, I will first discuss a brief history of social media intelligence and the two main case studies this paper will deal with. I will then review the relevant literature to this topic, demonstrating how private profit-seeking platforms enable the efficacy of SOCMINT by creating an environment of normalized data disclosure. Platforms seek to expand data disclosure because data can be monetized through targeted marketing. I will discuss in detail the targeted marketing ecosystem, identifying it as the root of social media intelligence as an effective law enforcement tool thanks to its profit-motivated desire to expand means of data collection and comprehension. I will then connect these two sections, recalling information from the case studies and literature to illustrate my argument in full. Finally, I will identify interventions to reduce harm created by law enforcement use of social media intelligence, arguing for a cultural shift and legislative regulatory shift. But, before we go any further, I will first offer a primer for social media intelligence and a discussion of this paper’s case studies.

Social Media Intelligence (SOCMINT) Primer

The combined use of social media intelligence and facial recognition algorithms is not an imagined future; it is already here. Social media intelligence is a sub-category of open-source intelligence (OSINT), a law enforcement intelligence category focused on publicly available data. While in some use cases there is a level of citizen cooperation with police (people may submit photos to police directly), the information is drawn from public sources, so SOCMINT is oftentimes an unwilling or unknowing collaboration between top-down actors (law enforcement) and bottom-up actors (social media users) (Edwards, Urquhart 2015, 6). This unwilling and unknowing cooperation is a result of the environment created by platform owners seeking profit through predictive advertising.

An early example of SOCMINT use comes from across the pond in Manchester, United Kingdom. Following the 2011 Manchester Riots, police crowdsourced photos of the riots from Facebook and Flickr and asked for public cooperation in identifying suspects (Edwards, Urquhart 2015, 9). The use of crowdsourced information and the need for public cooperation are characteristic of SOCMINT without facial recognition. Because a mass of unstructured data is difficult to parse, law enforcement requires a significant amount of manpower to sift through the photos and identify people. To manage this obstacle, law enforcement turns to citizens for assistance. This changes with the adoption of algorithmic facial recognition software by police. Even so, as the January 6th insurrection investigation demonstrates, some citizens may still participate.

Facial recognition technology dates back to the 1960s, when the “technology” relied on hand coding of facial features and recording of those features in a database (Rainie et al. 2022). The rise of machine learning facial recognition changes the paradigm entirely, requiring far less work on the human side, some might even say next to none. Facial recognition has become easier to use and requires less manpower to operate; it is thereby implemented more broadly. The Government Accountability Office reported that, as of July 2021, 42 federal agencies employing law enforcement officers had utilized facial recognition technology (Rainie et al. 2022). This does not account for use of facial recognition by state or local police departments, but nonetheless demonstrates widespread adoption.

With some background on social media intelligence, I will now jump into SOCMINT case studies. The first case study I will be discussing is the January 6th investigation into the storming of the Capitol by right-wing dissidents, also called the January 6th Insurrection. In the hundreds of arrests following January 6th, which continue to pile up, social media posts were often cited in complaints against suspects (Morrison 2021). The siege was well documented. A number of participants willingly posed for photo and video, and some even posted themselves entering the Capitol (Morrison 2021). Yet, even those who did not pose willingly ended up caught in the lenses of cameras.

A group calling themselves “Sedition Hunters” have been cooperating with law enforcement to identify Jan. 6 insurrectionists (Gross 2021). These online investigators process photo and video from the insurrection and offer the information they glean to the FBI, who seem to be lagging somewhat due to the sheer volume of information. One such person identified by Sedition Hunters is nicknamed “CatSweat” for his Caterpillar construction company sweatshirt (Gross 2021). While his face was covered for the majority of the insurrection by sunglasses or a mask, the citizen investigators found a video of someone matching his limited description at Trump’s rally without a mask. After a run through facial recognition software, the investigators found a litany of information on him ranging from his occupation to his social media accounts (Gross 2021). Such information would not be as readily available on short notice without use of facial recognition; matching CatSweat to his social media accounts would have taken much more time.

These online investigators rely heavily on facial recognition software in their tips to the FBI. The FBI itself admits to utilizing its own facial recognition for some cases, though it does not reveal to what extent it is used (Gross 2021). In a further attempt to downplay facial recognition’s significance in their investigations, both groups are quick to say that the technology is only a stepping stone and that they take other measures to corroborate their findings (Gross 2021). Yet, still, thanks to facial recognition, a great deal of information is revealed without a great deal of manpower. It extends the power of investigators beyond their individual means of matching faces.

The use of facial recognition in combination with social media intelligence by cooperating citizens demonstrates the power of this technology. Exact details on FBI usage are not public, but, as the FBI admits to using facial recognition in a general sense, we can extend our analysis of citizen use to the FBI as well. It is only because there is so much documentation of the insurrection that the Sedition Hunters are able to find their targets; CatSweat would not have been identified if there were only photos of him wearing a mask. Yet the sheer amount of photo and video posted to social media would be impossible to sift through without facial recognition, as would finding the identities of individuals who took part in the insurrection. Using facial recognition on these videos makes the intelligence they present more comprehensible, and therefore more useful to law enforcement agencies investigating Jan. 6. Without this comprehension, using social media intelligence would require more time and more people to analyze the information. Such intense time and personnel costs would limit social media intelligence in the same way that traditional surveillance is limited: by time and money. Law enforcement agencies can only dedicate so much time to surveilling someone traditionally, as it requires officers to be physically at a location or watching footage.

The second case study for the use of social media intelligence and facial recognition by law enforcement centers on the Black Lives Matter (BLM) protests in 2020 following the murder of George Floyd. This case study presents the double-edged nature of filming public events and persons. In the case of police brutality, the ubiquity of cameras can be used to hold police accountable (Kelly, Lerman 2020). This idea is corroborated by Penn State Legal Review author Allison Amatuzzo, as she notes that citizen camera use changes the power dynamic between law enforcement and citizens, allowing citizens to enforce a level of accountability (Ammatuzzo 502). George Floyd’s murder was as public as it was because of the ubiquity of cameras. As I mentioned, this sword is double-edged, though, because protestors are often filmed by the same cameras used to hold police officers accountable. During the Black Lives Matter protests of 2020 following Floyd’s death, a number of protestors were identified and arrested following the collection of SOCMINT and the use of facial recognition and object recognition software by law enforcement, on both a local and a federal level.

Police investigators seeking to find out who was responsible for damaging police cars following a protest in Philadelphia turned to social media, and compared photos of their own to those posted by others at the protest. Using publicly posted photo and video, they were able to match Luke Cossman’s face to that of one of the alleged vandals thanks to a small cross tattoo under his eye (Shepherd 2020). Picking out such a small detail is exactly the use of facial recognition software. The other alleged vandals were identified as Steven Anderson, Fransisco Reyes, William Besaw and an unnamed teenager. Anderson and the teen were identified by association; they were Facebook friends with Cossman. Reyes posted a photo of himself wearing the same outfit on Instagram. Another individual, photographer Sammy Rivera, was identified by the skateboard that he handed to Cossman and other individuals damaging the car. Rivera and five others were arrested for allegedly vandalizing police vehicles on May 30.

This sequence of events echoes fears of many activists, including Rivera mere weeks before his arrest, that posting photos with protestor faces online could result in arrests (Shepherd 2020). Their fear, evidently, is not unfounded. Due to the number of cameras capturing the protest and damage to police vehicles, police officers were able to identify suspects in this case of alleged vandalism.

In Portland, around the same time, Kevin Benjamin Weier was arrested for attempted arson of a federal building. During a string of Black Lives Matter (BLM) protests in Portland, Oregon, federal officers actively monitored social media and livestream feeds to arrest protestors (Morrison 2020). Weier’s case falls into the livestream category. Federal officers were watching a YouTube livestream trained on Portland’s Federal Courthouse that showed a protestor, whose face was obscured, taking a flaming wooden board and placing it against the exterior wall of the courthouse; a second protestor, Weier, whose face was visible to the camera, then moved the board to lean it against the wooden boards covering the courthouse window (Morrison 2020). Weier was arrested by federal officers shortly thereafter. The livestream was the only evidence cited as probable cause to arrest him (Morrison 2020). The YouTube livestream had unwittingly become part of a police investigation.

Without social media videos of Cossman and associates, law enforcement may have never identified the alleged vandals. Without a YouTube livestream whose path he happened to cross, Weier would not have been arrested for placing the board against the federal building. Clearly, the number of cameras and the number of users posting videos taken by said cameras affected whether or not law enforcement could arrest their suspects.

I chose two case studies whose subjects are so vastly ideologically separated to draw attention to the fact that the use of social media intelligence and machine learning facial recognition software is not a partisan issue. Prior to the use of SOCMINT and facial recognition, carrying out the above cases would have been costly in both money and time. Facial recognition and social media intelligence allow police to operate as the federal officers did in Portland. Instead of having boots on the ground to observe people, law enforcement officers can simply scroll on social media or view a livestream, extending their reach and reducing the number of officers needed to conduct surveillance. The use of social media intelligence and facial recognition represents a paradigm shift in the power of police, allowing law enforcement agencies to stretch beyond the limits of manpower and time, enabling a greater reach for police surveillance than ever before. This novel form of surveillance is entirely unregulated. This power presents danger to everyone. If two so vastly ideologically different cases have been affected by it, anyone can be.

To further my point, let us examine a third case study. In a 2023 Human Rights Watch report, researchers found that authorities in Egypt, Iraq, Jordan, Lebanon and Tunisia have taken to using forms of SOCMINT in their policing of LGBTQ+ people (Human Rights Watch 2023). While the United States does not engage in targeting LGBTQ+ people on the level that these countries might, the use of SOCMINT by law enforcement to do so demonstrates the power, access, and intimacy granted by these practices. Human Rights Watch documented numerous cases of entrapment on Grindr, an online dating application targeted towards gay and bisexual men and transgender people, and on Facebook, a platform based on social connections, where law enforcement created fake profiles to impersonate LGBT people (Human Rights Watch 2023). Despite this extra step, this practice is hardly different from the activities of law enforcement during the January 6th investigation and the BLM protests.

Making an account is hardly a leap from watching a YouTube livestream. Many police departments already have social media accounts to begin with. If police in the United States were motivated, they could have caught insurrectionists using a similar tactic. In fact, one citizen did! Someone going by the name of Claire saw the insurrection on the news as she sat in her Washington, D.C. hotel room, and decided to flip her dating app settings to conservative. By doing so Claire was able to identify a number of insurrectionists (Gross 2021). A motivated enough police officer could do the same thing. The FBI already uses fake accounts to infiltrate activist groups (Swan 2020). Any level of law enforcement could engage in the same tactic with the success that Claire had.

Clearly, these practices are available to law enforcement. Most have just chosen not to use them at the moment. All of these case studies, but particularly this third one, raise the question of what on social media ought to be considered public information. How much of the information that we publish online should law enforcement have access to? Is information posted on the internet public as actions taken in real life are? Why is so much information available on the internet in the first place?

These case studies make clear that social media intelligence is only available and useful to law enforcement if two criteria are met. First, users must produce a massive volume of data that law enforcement can analyze. Second, the massive volume of data must be comprehensible. The unknowing “cooperation” of social media users, who post photo and video documenting their lives and the world around them, fills the first criterion. Facial recognition, as we have seen, fills the second. Despite the importance of user involvement, neither the government nor law enforcement is forcing social media users to post such content. They do not need to be so proactive. Users are already influenced by private companies interested in collecting data to engage in disclosure behaviors. The police do not need to create their own facial recognition tools, either. Rather, they can take advantage of an environment of data disclosure for their volume of data, and of pre-fab facial recognition created by private companies for data comprehension.

To summarize the issues so far, platform owners are motivated to create an environment of data volume and comprehension because that data can be sold to advertisers seeking information about their consumers. To do so, platform owners shape the affordances of their platforms, the functions available to a user on a given platform, in the interest of structuring and extracting the greatest amount of data. Location tagging on Instagram is an example of an affordance that increases the amount of structured data to be extracted. Platform owners are additionally motivated to encourage data disclosures from their users. The more data they can collect, the more data they will be able to sell to advertisers. A higher volume of data also allows for pattern identification, a necessary component of any kind of prediction. By encouraging personal disclosure, platform owners also indirectly encourage the disclosure of other people’s data: users who are comfortable disclosing their own information will be less hesitant about disclosing a peer’s information, as one does when filming a stranger in public. This creates a norm of peer-to-peer surveillance, also known as sousveillance. This all serves to increase the volume of data.

To make this data comprehensible, platform owners turn to machine learning programs, also referred to as AI or artificial intelligence. These programs make judgements based on input data, identify patterns and make predictions based on those patterns. This increases data comprehension, thereby increasing its value to advertisers. The more comprehensible the data is, the more it is worth. Thus, platform owners are motivated to increase the efficacy of machine learning software.

This environment is where we find ourselves today. Platform owners have created means of collecting vast amounts of data. Through large volumes of data and comprehension of data, advertisers can shape what we buy and when we buy it; this is known as behavioral modulation. Yet this is not the most harrowing consequence of the situation. Platform owners and advertisers, through an act of gross negligence, have created an environment in which law enforcement can reach further than ever before. The police now use social media for probable cause, regarding the information posted as “open-source.” They use AI to pick out people in a crowd, identifying suspects with the inherent bias of machine learning programs. Machine learning programs are biased against minorities thanks to the data sets they are trained on. In the hands of law enforcement, this bias has drastic consequences. It is the volume of data and the comprehension of that data that make this practice possible.

With an understanding of the real-world application of facial recognition and SOCMINT, it is necessary to examine the existing literature around this topic to illustrate why and how private companies have created an environment of mass data disclosure, or datafication. It is this environment that allows law enforcement to use social media intelligence with the level of effectiveness that they do. Before we can understand this ecology, let us discuss the original motivator for datafication: marketing.

Predictive Analytics and Targeted Marketing

Traditional marketers have a problem: they do not know what people want. Take, for example, Hoagies, Inc., a store that specializes in large sandwiches known in various parts of the world by names such as subs, hoagies, and heroes. Hoagies, Inc. has no clue who wants a hoagie. The ideal, of course, is for Hoagies, Inc. to show ads for hoagies to an audience that is already primed to purchase a hoagie. If they could target their advertising to that kind of individual, then Hoagies, Inc. would only have to spend money serving ads to people who are sure to be customers! It is this desire that leads to targeted marketing, where instead of the wide net cast by billboards, ads are served or not served to users on digital platforms based on a data profile connected to that user.

Mark Andrejevic, award-winning author and Professor of Communications and Media Studies at Monash University Melbourne, breaks this down in his essay Exploitation in the Data Mine. He describes how the conceit of targeted marketing is that massive data sets will accurately predict the behavior of consumers, allowing marketers to spend money only on advertising products to people who will definitely purchase them (Andrejevic 2012, 71). This practice represents a chance to increase profits in two ways, both by cutting costs on ineffective marketing and by increasing the number of consumers out of a pool of possible consumers. Nick Couldry, widely influential Professor of Media, Communications, and Social Theory at the London School of Economics, and Ulises Mejias, award-winning Professor of Communication Studies at SUNY Oswego, discuss the economy of this practice in their book The Costs of Connection. Shoshana Zuboff, author of seminal work Surveillance Capitalism, aligns with their ideas, as quoted in Phillip Staab and Thorsten Thiel’s work Social Media and the Digital Structural Transformation of the Public Sphere. In essence, data is a raw material of the internet that can be extracted through surveillance processes and converted into money via personalized advertising (Staab, Thiel 2022; Couldry, Mejias 2019, 72). The term “extracted” is used here to get away from the ideology that data simply exists for the taking. It is a revenue model that seeks to find more and more means of extraction to make more predictions. Bruce Schneier, a cryptographer, remarks on the extent of these predictions in his book, Data and Goliath. Schneier notes that, through predictions off of big data, marketers may know the toothpaste you will buy or the car you want; the data may know these things even before you do (Schneier 2016, 34). He recounts an anecdote of a teenager who had recently become pregnant, to whom Target began serving ads for baby clothes before she knew she was pregnant (Schneier 2016, 33). Target recognized a pattern in her buying habits as matching that of expecting mothers, and made the judgement to place her in that category. These kinds of judgements represent the power of predictive analytics.

Through predictive analytics, huge inferences can be made about what a person might do next. This allows targeted forms of marketing that push consumers to act in a way that marketers desire, whether through a targeted ad or through a just-in-time coupon (Andrejevic 2012, 75, 132). Even someone with a disdain for seeing ads is likely to be interested in a discount for something they were considering buying. Nick Couldry and Ulises Mejias identify this same practice, describing the commercial interest for “data behaviorism” as modulation of behavior for profit (Couldry, Mejias 2019, 127). Being able to influence people’s buying habits is a truly powerful tool; being able to wield it would mean higher profits for marketers and manufacturers. The consensus on this spreads further, as Phillip Staab and Thorsten Thiel identify the same desire in their writing on the evolution of the public sphere. Staab and Thiel remark that the appropriation of data by surveillance corporations reflects an economy that seeks to measure, influence, and control behavior (Staab, Thiel 2022). Through predictive analytics, advertisers and platform owners stand to make a great deal of money by exercising influence over consumers. This is the profitable future imagined by marketers. Thus we see the beginnings of the environment I have described; this is the profit incentive that drives the expansion of data extraction and tools of data comprehension.

The practice of predictive analytics is a marketer’s dream, but marketers need a stable flow of data to monitor consumers. The more data gathered, the more powerful a statistical pool is (Andrejevic 2012, 74). Not only that, but the data extracted is only as useful as it is comprehensible, as Couldry and Mejias concur (Couldry, Mejias 2019, 117). Both the amount of data collected and how understandable it is must always increase. As Staab and Thiel, in parallel with Couldry and Mejias, remark, since capital imperatives are involved, the machinery of data extraction must constantly expand (Staab, Thiel 2022; Couldry, Mejias 2019, 33). In this expansion we then have two clear goals: volume and comprehension.

Digital Platforms as a Means of Increasing Data Volume

In order to protect people’s sense of freedom, surveillance must be repackaged and disguised; it is through lapses in our sense of being watched that the tools of surveillance are allowed to expand (Couldry, Mejias 2019, 10, 99). If surveillance tools were packaged into, say, the way in which people interact with friends, users would be much more inclined to allow surveillance. But, before we can dive into the expansion of modern surveillance tools, we must first understand a crucial surveillance theory: Michel Foucault’s Discipline Society and Panopticism from Discipline and Punish. His theory of Panopticism is the form of surveillance preceding our current state of sousveillance. In order to understand how we arrived in a state of sousveillance we must understand its predecessor.

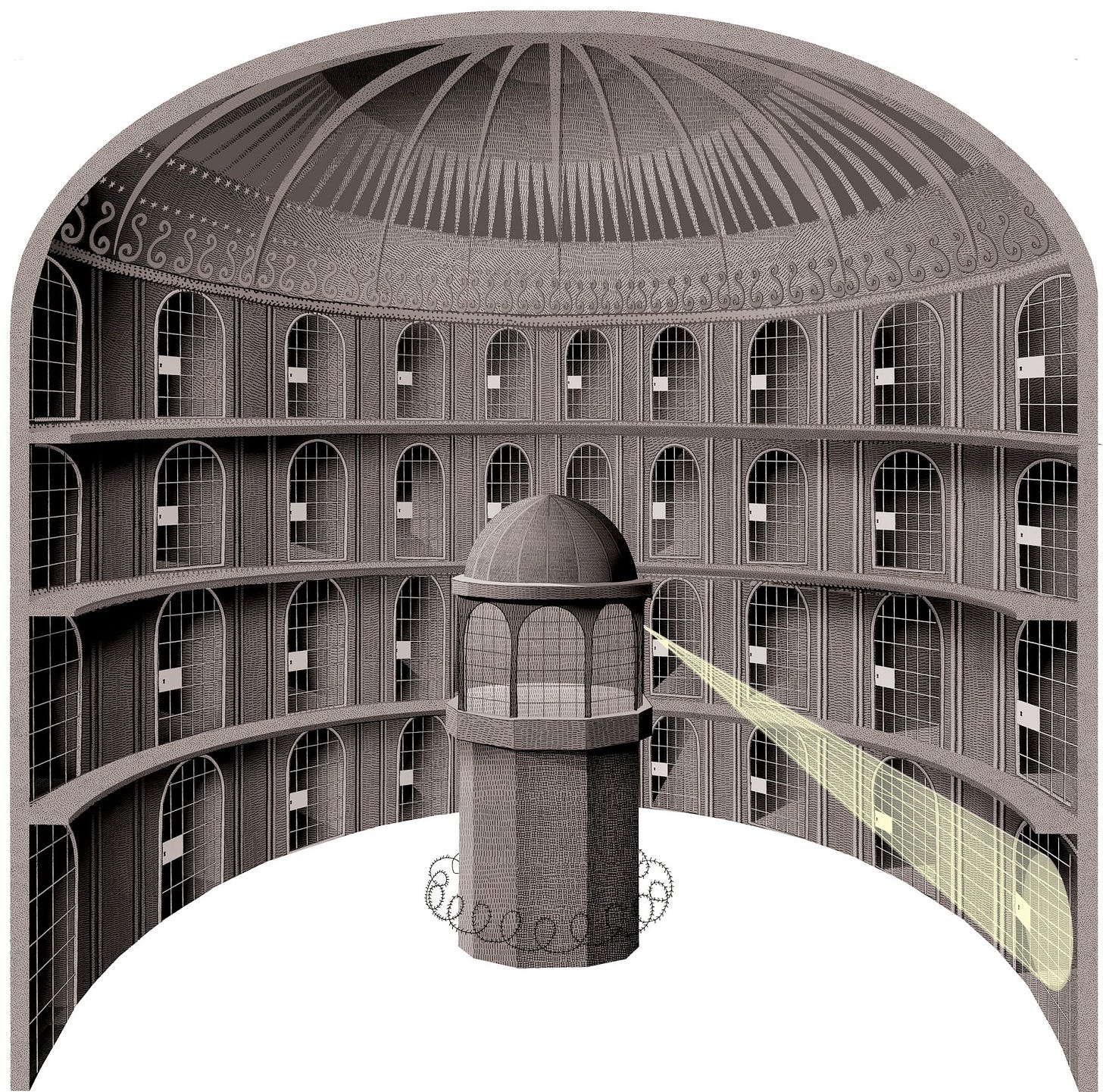

Foucault, a renowned philosopher and political theorist, draws his theory of Panopticism from Jeremy Bentham’s panopticon, a hyper-efficient prison built as a cylinder with a central guard tower able to observe every cell (Figure 1). The observation windows of the guard tower are mirrored, making it impossible for the prisoners to know whether or not they are under observation. Bentham argues that this setup means that prisoners will act as if they are under watch at all times (Foucault 1975, 200). In Discipline and Punish, Foucault identifies that for citizens to follow the regimented life demanded by the industrial age, they must constantly be under observation to ensure they independently maintain their regimented lives in the interest of the institution. Discipline must come without excessive force, but rather through observation and shaping of behavior through that observation (Foucault 1975, 199). When someone believes they are being observed, they will act as they ought to. If they constantly believe they are being observed, they will constantly act as they ought to. In Panopticism, observation and power must be visible but unverifiable (we must see cameras in public, but be unsure if they are recording us) so that the subject is in a conscious and permanent state of visibility, allowing power to shape and mold them automatically (Foucault 1975, 201). Here we see a piece of Couldry and Mejias’s idea of hidden surveillance; its obscurity allows power to mold behavior. Panopticism’s mode of observation is limited to structures, lacking the ability to repackage itself. In the digital age, however, surveillance has moved beyond structure into a more liquid, amorphous form, one that can be both disguised and repackaged.

This liquid form is described by Zygmunt Bauman, renowned surveillance theorist. Bauman describes a new kind of worker control that moves beyond Bentham’s structured panopticon, one that is disguised as Couldry and Mejias propose. He writes about the synopticon, wherein we are encouraged to carry our own personal surveillance devices like snails carry their homes (Bauman, Lyon 2013, 59). The job of surveillance becomes a job of the subordinates, who are tasked with self-disciplining. We are encouraged to keep our surveillance devices, which could be likened to smartphones or social media profiles, in good condition, monitoring our lives (Bauman, Lyon 2013, 58). Think of the number of ways we are encouraged to use our smartphones each day. The smartphone’s all-in-one nature encourages constant use as a watch, a map, a calendar. Social media, more specifically, encourages status updates, engagement through likes, and notifications about posts. Gilles Deleuze, French metaphysics theorist, has a parallel idea that he refers to as the Society of Control. This departs from Foucault’s Discipline Society, which is structured and bound to buildings; Deleuze’s Society of Control is composed of vast open networks (Deleuze 1992, 6). We may travel on the internet infinitely, but within the bounds set by platform owners.

Here we see a representation of Couldry and Mejias’s proposal for surveillance to be disguised and repackaged. New affordances or platforms can be new forms of surveillance, and hide that they are observing their users by disguising surveillance tools as social interactions. Both Bauman and Deleuze acknowledge that a state of increased connection, like the one offered by social media, could be used as a form of surveillance. Social media platforms encapsulate the new liquid tools of surveillance referred to by Bauman and Deleuze. They can be anywhere, and seemingly extend infinitely, but operate entirely within the bounds and goals of platform owners. These platforms are designed with specific goals in mind: to present a structure of the previously unstructured data produced through social interaction. Platforms are structured online spaces that offer social services of various sorts, generally operating without explicit payment; the payment comes in the form of collected data (Couldry, Mejias 2019, 25). That story post I could make, tagging my dorm building location, can be sold by Meta, Instagram’s parent company, to a data broker, who then sells that information to advertisers. In return, I get to use Instagram for free! As a result of this data disclosure, I might get ads targeted to college students, perhaps even as granular as ads that focus on the kind of student who lives in my dorm building.

Through platforms, the open-ended interactions of social life can be packaged and structured for economic extraction. That photo on my Instagram story, Instagram posts about a holiday vacation, family photos uploaded to Facebook, movie opinions shared on Twitter (not calling it X), can all be farmed and sold for profit. Mere daily activities of life are turned into sources of extractable value (Couldry, Mejias 2019, 31). Just as Bauman refers to monitoring becoming the job of the subordinate, Couldry and Mejias refer to this activity of social life as unpaid labor because it generates capital for platform owners (Couldry, Mejias 2019, 58). Platform owners have found a means of extracting profit from a pre-existing behavior: socializing. They structure these interactions, collect the data, and spit it back in the form of ads. If platforms can attract enough users, the infrastructure of data collection can quantify activity on a massive scale. The more users there are, the more interactions can be datafied and the more information can be scraped. The more information can be scraped, the more money there is to be made through the sale of data to advertisers.

This is the first step towards the high data volume marketers desire to feed their predictive analytics. Social media is the tool by which such a volume of data can be collected. Motivated by the profit incentive in predictive analytics and targeted marketing, platform owners seek to capture the data exhaust of social interaction, and structure it to be used effectively. Next, they need to form a massive volume of data by attracting users and rationalizing the disclosure of their personal data.

Rationalizations of Datafication — Roping Users In

In order to form a volume of user data, users and their activities must be folded into modes of data collection. Nick Couldry and Ulises Mejias term this process datafication, a capitalist practice that scrapes more and more of life into quantifiable terms (Couldry, Mejias 2019, 5). Through datafication, social interactions become enfolded into a capitalist domain of extraction. Social media is a perfect example of this, where friendships become quantified into followers, like counts, and comments. Couldry and Mejias refer to a similar phenomenon discussed by Bauman and Deleuze, in which more and more of life is datafied, made into data (Couldry, Mejias 2019, 12-13). Bruce Schneier, a cryptographer, agrees in his book Data and Goliath, where he remarks that it is impossible to avoid interacting with computers in our society (Schneier 2016, 17). In this way, our lives echo Deleuze’s society of control; leaving the surveillance dragnet is not an option. Computers are so embedded in daily life that they are a seemingly intrinsic part of it, inseparable from day-to-day tasks.

Schneier drily summarizes this feeling in saying that your smart fridge is not a fridge with a computer in it, but a computer that also keeps food cold (Schneier 2016, 15). Couldry & Mejias and Schneier agree that adoption of social media amplifies this issue (Schneier 2016, 29; Couldry, Mejias 2019, 45). While a smart fridge is only a part of life in relation to food, social media succeeds at quantifying a constantly active area of life. The practice of datafication described by Couldry & Mejias, paralleled by Schneier, Bauman, and Deleuze, is the way in which advertisers begin to rope users into a datafied social life. Because advertisers need mass amounts of users to produce mass amounts of data in order to fulfill their revenue model, they are motivated to encourage more and more users to engage with social media.

Scholars agree that people engage with social media out of a desire for uniqueness, a calculated public image (Trottier 2012, 8; Staab, Thiel 2022). This desire is encouraged and augmented by social platforms and their affordances. Profile pictures, status updates, profile bios, usernames, the way one chooses to caption their photos, and tags are all examples of such affordances. This behavior forms an ideology of uniqueness on social media that serves to further narrow data profiles. Couldry and Mejias identify this ideology as one of several produced by the culture machine of social media. They describe the ideology of connection as a requirement, the ideology of datafication (everything must be datafied), and the marketing ideology of personalization (you want things tailored to you!) (Couldry, Mejias 2019, 16). Through personalization, users offer increasingly granular information about themselves, a desirable behavior for platforms interested in personal data.

Spreading these ideologies on social media is an easy task; it only takes a minority to spread them. Drs. P. K. Masur, Dominic DiFranzo, and Natalie Bazarova, researchers specializing in digital media, social media, and human behavior, support this idea in their work Behavioral Contagion on Social Media. They found that behavioral norms can be shifted on social media with very few people. Indeed, with just twenty percent of posts reflecting a new behavioral norm, Masur et al. found that users would shift their behavior to abide by that new norm in their own activity (Masur et al. 2021). For example, if twenty percent of users began posting selfies with a certain face, that would be enough to shift behavioral norms toward that trend. Harnessing this towards data disclosure behaviors follows in the same manner. Only twenty percent of users need to use location tags, or take part in posting NPC videos, for that behavior to catch on. With just a small number of users, ideologies connected to data disclosure can be spread rapidly.

Through these ideologies, platform owners can encourage disclosure of personal information to be fed into machine learning programs. Machine learning can then make such data comprehensible. This comprehensible information is then sold to advertisers for profit. Because there are capitalist motivations at work here, there is a constant need to expand, both in terms of efficiency of technology and in terms of the amount of datafication (Staab, Thiel 2022). With this, then, platform owners are motivated to introduce more and more ways for users to disclose information through their platforms. The more information can be disclosed, the more profit they stand to make. The more users disclose, the more other users will disclose. It is a self-perpetuating pattern.

This brings us to our current state, observed by several scholars as a reliance on these technologies to socialize and move about our daily lives that has gone so far that not interacting with them is tied to an atrophy of social capital (Trottier 2012, 22; Andrejevic 2015, 74; Schneier 2016, 15). Because of this social risk, users feel pressured to join, further expanding the volume of data. The desire to maintain social capital is just one of several reasons that users engage with social media. Some users may even find that photos or videos of them already existed on social media prior to their joining (Trottier 2012, 68). Once on social media, users ultimately end up disclosing their own information. Gordon Fletcher, Marie Griffiths, and Maria S. Kutar note in their study “A Day in the Digital Life” that individuals constantly allow pieces of their personal data to be collected (Fletcher et al. 2011, 2). Dr. Ralf De Wolf addresses this same idea, noting that people balance how much they want to disclose based on a cost/benefit trade-off (de Wolf et al. 2023, 154). This trade-off is not made fairly, as users feel pressure to engage with a service that operates on this bargain. Daniel Trottier observes this, noting that users maintain a trade-off between ensuring privacy and garnering social capital by disclosing aspects of their lives (Trottier 2012, 64). Fletcher, Griffiths, and Kutar do not identify the same trade-off as Trottier, however. Instead, they focus on the trade-off for immediacy and convenience (Fletcher et al. 2011, 3). Either way, because these platforms are embedded in social life, the “choice” to use them is a pressured one, making the trade-off hardly fair. Before further illustrating the privacy practices of users and their consequences, I will first discuss definitions of privacy.

A Question of Privacy

The concept of privacy has been a topic of philosophical discussion going back to Aristotle, who discussed the difference between the polis, the public sphere of politics and activity, and oikos, the sphere of family (Roessler et al. 2023). Ironically, Oikos™ is now the name of a Greek yogurt brand that uses invasive trackers on its websites (Danone North America 2023). This distinction for Aristotelians derives from a social ontology that makes it natural for certain things to be private and other things to be public. Liberal philosophers such as Thomas Hobbes, John Locke, and John Stuart Mill define the public as the appropriate realm of government authority while the private is the realm reserved for self-regulation (Roessler et al. 2023). This bears similarity to Aristotle’s view, as both are concerned with how government is involved in private spaces. These ideas form the basis for later conceptions of privacy into the digital age, where a great deal of concern is given to how a government might be invading the privacy of its citizens.

Informational privacy, as in privacy regarding information about oneself, narrows this idea further. The first definition of informational privacy was coined by Samuel Warren and Louis Brandeis as a right to “be let alone” (Roessler et al. 2023). This definition of privacy can be named “non-intrusion privacy” for our purposes. Though this idea initially arose in a dissenting opinion in the wiretapping case Olmstead v. United States, it has since been adopted into U.S. Supreme Court precedent in Katz v. United States, making it a foundational legal ideal regarding privacy in the country ("Expectation of Privacy," Legal Information Institute). Katz v. United States describes a means of discerning whether an individual’s privacy has been breached by testing whether the individual had a reasonable expectation of privacy. An individual is deemed to have a reasonable expectation of privacy if, and only if, i) the individual has exhibited an actual subjective expectation of privacy and ii) the expectation is one that society is prepared to recognize as reasonable ("Expectation of Privacy," Legal Information Institute). It is important to note that this legal precedent was set in 1967, prior to the internet. I must also note that the language of Katz v. United States only offers privacy to the subject insofar as their society agrees that they ought to have privacy, removing agency from the subject. Again, we see here that the concern for privacy is with regard to the government; both Olmstead v. United States and Katz v. United States were cases concerned with government intrusion.

Such a conception of privacy is far too limited for the state we find ourselves in, where private companies have access to an enormous amount of personal information about the population. Herman Tavani, Ph.D., Professor Emeritus of Philosophy at Rivier University and author of a number of books on ethics in the digital age, agrees that the United States’ conception of privacy is lacking. In his writing, Philosophical Theories of Privacy, Tavani notes that the ideas of privacy from Warren and Brandeis underpinning this conception cause privacy to be confused with other concepts. The view that originated from Warren and Brandeis, according to Tavani, confuses the right to privacy with the condition of privacy, i.e., when one is alone (Tavani 2007, 5). Tavani argues that being let alone refers more to a status than to a right one has. The flaws in these foundational ideas, as Tavani points out, limit our present-day privacy rights.

Tavani offers an alternative called Restricted Access/Limited Control, abbreviated to RALC. RALC focuses on the differentiation between the concept of privacy and the justification and management of privacy. Someone has privacy, according to Tavani, when they are protected from intrusion, interference, and informational access by others in a given situation (Tavani 2007, 10). This is the restricted access part of RALC, and it covers both physical and digital intrusions on privacy. The limited control arrives in the justification and management of privacy, where Tavani believes that someone only needs a certain level of control over their privacy. He specifies that control is only needed with respect to choice, consent, and correction (Tavani 2007, 12). That is, a person needs control to choose between situations that offer the different levels of privacy they desire, the choice to consent to a waiver of privacy, and the ability to manage and amend their information. This conception of privacy hands much more agency to the subject, rather than to the viewer as Katz v. United States does. While the United States does not have such subject-oriented privacy legislation, the European Union does, known as the General Data Protection Regulation (GDPR).

The GDPR contains the guidelines for data protection in the European Union. The E.U. has significantly outpaced the United States with its privacy regulation, relying on regulation built out in the 21st century instead of the mid-20th. The GDPR lays out fairly stringent rules that force data collection to operate under specific guidelines. The GDPR focuses on transparency, a limitation of purposes for which data can be processed, a minimization of data, accuracy, limitations on storage, and confidentiality (“Official Legal Text,” General Data Protection Regulation). The E.U.’s definition of privacy is far more stringent than that of the United States. Instead of an assumption of privacy based on reasonableness, the E.U. focuses on unambiguous consent of the data subject for data processing within a narrow set of reasons, most of which have to do with legal obligation or life-or-death situations (“Official Legal Text,” General Data Protection Regulation). This would mean that every interaction where data is collected and processed must be done with the consent of the data subject; not only would this eliminate non-consensual filming, it would make collecting data from social interactions that much harder. As discussed above, concern for privacy is a key factor in determining privacy management. If people were truly informed of how their data was used, they might not consent. The E.U. puts forward one more key point in the GDPR having to do with access to data. Under the GDPR, data subjects have the right to access, erase, restrict use of, rectify, or entirely object to the processing of their data (“Official Legal Text,” General Data Protection Regulation). This level of control and restricted access aligns with Tavani’s conception of privacy; it is far more comprehensive and built for the digital age. Aside from legislative lag, the United States lacks such regulation for one key reason.

If everyone had the kind of control over their data provided by the GDPR, that data would no longer be profitable. It is the very basis of the predictive analytics revenue model that data is an exclusive commodity bought with money. If every data subject had access to it, or could restrict the processing of that data, they could sell data to advertisers themselves. It is the economic model of advertising that keeps our legal system shackled to a legal precedent set last century. And it is that shackling that allows the government to use social media photos and videos to arrest people whom it otherwise would not have had the information to arrest. Without legislation, users have to define their own privacy practices, influenced by platform owners seeking to make a profit.

Fletcher, Griffiths, and Kutar categorize these user-defined, platform-influenced practices into privacy archetypes, demonstrating the different levels of trade-off that de Wolf and Trottier refer to. Fletcher, Griffiths, and Kutar note three archetypes: fundamentalists, who give inaccurate, incomplete data; pragmatists, who balance risks to privacy against the benefits they receive; and the unconcerned, who have little to no anxiety and give the level of information required (Fletcher et al. 2011, 3). While these exact archetypes are not noted by Trottier or de Wolf, the concept of differing levels of information disclosure holds true in their writing.

Exactly what determines these archetypes is explored by de Wolf et al. in their 2023 article “Predicting Teens’ Privacy Management and Attitude towards Data Protection on Social Media.” They studied different factors for determining privacy management and attitudes towards data protection in teens. Ultimately, de Wolf et al. discovered privacy concern to be the strongest predictor, followed by perceived data control and privacy literacy (de Wolf et al. 2023, 158). De Wolf’s very detailed examination of these factors is not matched by the other scholars cited here, though both Trottier and Fletcher et al. note that concern is a major factor in determining privacy disclosure. For Trottier it is that the concern for loss of social capital exceeds privacy concern, while for Fletcher et al. it is that privacy concerns do not outweigh concern for immediacy (Trottier 2012, 22; Fletcher et al. 2011, 3). In sum, concern about privacy significantly and positively relates to privacy management. Because of this relationship, platform owners are able to encourage disclosure by disguising data collection as social interaction. If users do not know the dangers of disclosure, or that they are engaging in it at all, they remain unconcerned for their privacy and will continue to engage in disclosure behaviors.

A lack of concern about one’s own privacy, which coincides with weaker privacy management, can lead to a lack of concern regarding the privacy of others. Allison Amatuzzo and Daniel Trottier discuss parallel ideas in their work, in which close observation of others’ accounts, or “stalking,” becomes normalized on social media, leading to what Amatuzzo refers to as sousveillance (Amatuzzo 2019, 491; Trottier 2012, 79). If you would not mind posting a video of yourself on social media, you are less likely to be concerned about posting a video of someone else. Sousveillance, as Amatuzzo describes it, is the citizens’ ability to capture and disseminate recordings to a wide network (Amatuzzo 2019, 492). In this state, citizens are watched not only by the government, but by their peers. Take our case studies, for example. Police do not have to work to watch citizens because a state of sousveillance allows them to merely observe the footage that citizens offer of their peers. While Trottier reflects further on the causes of this phenomenon than Amatuzzo does, they both agree that social media blurs the line between public and private (Trottier 2012, 61; Amatuzzo 2019, 502). With all of this, it is easy to see how we could arrive in a state where January 6th insurrectionists are filming each other or YouTube livestreams are trained on Black Lives Matter protests.

This blurring of public and private aligns with the work of Phillip Staab, Professor of Sociology at Humboldt University, and Thorsten Thiel, Professor of Democracy and Digital Politics at the University of Erfurt, who describe a shifting of the public sphere to the digital that our definitions of public and private have yet to catch up with (Staab, Thiel 2022). Trottier describes the root cause of this shift and the coupled sousveillance as a desensitization to being watched and a shifting understanding of what information is private (Trottier 2012, 79). According to Trottier, this normalization and desensitization occurs because users have the ability to watch others while being watched themselves. It is the power of observation that makes these platforms feel more democratizing. Moments of exposure are built into social platforms, and made to seem less impactful by allowing transparency into the exposure of others (Trottier 2012, 4). This sentiment is echoed by Mark Bartholomew, a law and online privacy professor at The University of Buffalo. Bartholomew summarizes Trottier’s point by remarking that if you are constantly under watch, it feels more acceptable to point your camera at someone else (Lim 2023). In interviews with Facebook users, Trottier found that while college students regarded “stalking” as invasive, they placed responsibility on the poster for leaving private information public (Trottier 2012, 67, 77). Management of this online image, though, requires a profile. Some users, Trottier found, discovered they had a digital footprint without ever posting themselves or having an account (Trottier 2012, 68). In order to remove or shift the public image of this digital double, people had to make an account. This forced engagement, together with the datafication of social life and the motivation to maintain social capital, undermines the logic of many of the users Trottier interviewed. If users are to be truly responsible for their online image, it should be entirely up to them whether photos of them are on a platform at all. This is not, however, the basis on which the United States operates legally or ideologically. Our definitions of privacy do not account for the phenomenon of sousveillance.

The attitudes and behaviors detailed above demonstrate a success by platform owners in encouraging the disclosure of personal information. Because interpersonal surveillance, or sousveillance, is normalized, so too is the disclosure of personal information and the surveillance of users, and vice versa. With these ideologies rooted in user behaviors, platform owners have plenty of data to feed to machine learning and subsequently advertisers, making a profit by doing so. These attitudes also demonstrate the origin of the behavior this paper centers around: filming strangers in public. The linking of interpersonal surveillance, sousveillance, with surveillance of the self is key; it is why the behavior of documenting a stranger in public is normalized. Why should someone whose privacy is constantly violated, someone who knows that everyone else’s privacy is constantly violated by private companies, care for the privacy of others in public?

As demonstrated here, platform owners have succeeded in achieving their volume of data. Now I turn to the second goal required by predictive analytics: comprehension.

Machine Learning for Comprehension of Data

Comprehension of mass amounts of data can be achieved through machine learning, but the judgements made by these technologies are obscured and biased due to the data sets they are trained on. Frank Pasquale details this in his book, Black Box Society, where he concurs with Andrejevic’s stance that machine learning and predictive analytics are used to make extraordinarily large inferences about people’s lives (Pasquale 2015, 8). Pasquale additionally parallels Lilian Edwards, Professor of Law and Innovation at Newcastle University, and Dr. Lachlan Urquhart, Associate Professor in Technology Law and Human-Computer Interaction at the University of Edinburgh, with regard to how data must be structured with the advent of machine learning. Because machine learning programs are designed to identify patterns, the data fed into them does not need to be structured in the way that classic surveillance data did (a census, for example, or an exact count). Instead, the data can be unstructured (Pasquale 2015, 20; Edwards, Urquhart 2015, 26). The possible consequences of these kinds of judgements are the meat of Pasquale’s work.

Across the literature on this subject, scholars agree that the way in which machine learning algorithms operate is opaque, even to the engineers who design them (Couldry, Mejias 2019, 124; Pasquale 2015, 9; Trottier 2012, 14). These machines are designed in such a way that it is impossible to tell what information fed to them is used, or how. Because of this, the conclusions and judgements they make are also opaque. However, scholars agree that the data sets used in machine learning programs are inherently biased. The claim of these systems is that they are judging individuals neutrally, but Couldry and Mejias note that there is no such thing as neutral data; therefore the judgements cannot be neutral (Couldry, Mejias 2019, 147; Pasquale 2015, 35). This is because human bias is embedded into data sets. AI facial recognition technology, a machine learning software, has particular difficulty differentiating the faces of people of color. Pasquale notes that this is perhaps due to the origin of many of these technologies, Silicon Valley, which is not known for its diversity (Pasquale 2015, 40). Even separated from Silicon Valley, data sets are inherently backward looking, resulting in a largely conservative view that skews towards inequality (Pasquale 2015, 38; Couldry, Mejias 2019, 137). As discussed, this bias can be seen in facial recognition software, where data sets used to train such programs contain more white male faces, leading to inaccurate matching of black and brown faces.

Pasquale’s discussion of exact consequences of this situation remain mostly theoretical, but his idea that faulty, biased, or destructive judgments will result from this biased data is carried by Couldry and Mejias as they note the inequitable treatment of poor and POC people as a result of such judgements (Pasquale 2015, 38; Couldry, Mejias 2019 144). Couldry & Mejias lay out a number of consequences, including job placement, credit score, education, and expectation of criminal activity. Their two key points, I believe align with Pasquale’s broad strokes. First, they note that the old rationale of giving more favorable terms to the poor or disadvantaged has been replaced with the idea that credit should only be given based on previous credit history (Couldry, Mejias 2019, 147). Second, Couldry and Mejias remark that if patterns in historical data are considered reliable proxies for future events than preventative actions may be taken, and their necessity may never have been demonstrated (Couldry, Mejias 2019, 148). It is in how to deal with this that Pasquale departs from Couldry & Mejias. Couldry & Mejias identify this proxy logic as the key issue, remarking that transparency, which Pasquale believes to be the key solution, is pointless (Couldry, Mejias 2019, 147; Pasquale, 35). Pasquale believes that if we could understand how these systems work, then we could game the system; it is Couldry & Mejias’ belief that the system should not exist at all. Pasquale sees the issue of machine learning is its opacity, that if the ways in which the machines operated could be known then we could influence the decisions they make and thereby take control of our lives once more. Couldry and Mejias see this as unnecessary. It is rather that such algorithms should not make decisions as they operate on a kind of proxy logic that, as mentioned above, results in action being taken for predicted events that may never occur. 
This, they argue, is the core issue of machine learning programs and their power. Yet, because advertisers want these kinds of predictions, they are motivated to make technologies that will offer them, that is, technologies that offer comprehension of data.

With this understanding, we begin to see the method by which advertisers can achieve one part of their dream. Driven by the profit incentive of predictive analytics, advertisers and those who would sell them data (data brokers and platform owners) are motivated to create a means of comprehending mass amounts of data. Machine learning offers this comprehension; marketers and data brokers are thereby motivated to improve upon machine learning programs. These programs are birthed from and improved by the private sector because of the profit incentive represented by predictive analytics and targeted marketing.

Case Studies

Now to return to the Sedition Hunters, citizen investigators working with the FBI and other law enforcement bodies to identify January 6th insurrectionists. These investigators would not be able to operate without the sheer number of social media posts of suspects. These posts were made on sites interested in scraping data for sale and profit, such as Instagram, Twitter, and Facebook. So many photos would not have been posted if not for a normalization of the disclosure of personal information in the digital world. Insurrectionists posed for photographs in some instances, or went so far as to post their own photos documenting their actions (Morrison 2021). Evidently, these people had some interest in publishing their information online. Adam Johnson, who became known as “Podium Guy” following a viral photograph of him carrying Nancy Pelosi’s lectern in the Capitol Rotunda, requested that his photo be taken and later bragged on social media that he had “finally become famous” and had “broke the internet” (Jackman 2022). Instances such as Podium Guy demonstrate Trottier’s observation that social media operates as a means of maintaining and augmenting social capital; Johnson desired to be famous and increase his social capital.

There is an interesting tension here, though, as clearly some protestors desired to conceal their identities. CatSweat, our previously discussed case, had his face covered for the majority of the insurrection. Yet still, CatSweat was photographed numerous times, and in one instance his face was shown. A decreased concern for one’s own privacy leads to a decreased concern for the privacy of others (Trottier 2012, 79; Amatuzzo 2019, 491; Lim 2023). Adam Johnson’s case and the number of January 6th insurrectionists who opted to pose for photographs demonstrate a lack of personal concern for privacy. Following this train of reasoning, CatSweat was photographed because other insurrectionists did not care for their own privacy and therefore did not care about CatSweat’s privacy. Despite his demonstrated desire to conceal himself from exposure, the mechanisms of platforms and the ideologies attached to them made it impossible for CatSweat to stay private.

As we have discussed, facial recognition is a key tool in making arrests for the Sedition Hunters. The literature reviewed above demonstrates the origin of these technologies. Their use by the Sedition Hunters further demonstrates this, as citizen investigators would not have access to government software; they only need to access software produced by private companies.

Arrests of January 6th insurrectionists through SOCMINT are not the culmination of government programs to increase surveillance. They are rather the result of law enforcement, and some enthusiastic civilians, taking advantage of an environment created by private companies interested in selling data for profit.

The same holds true for our other area of case study: the Black Lives Matter (BLM) protests following George Floyd’s murder in 2020. This case study parallels the issues demonstrated by the January 6th investigation and offers a direct illustration of how the affordances of platforms can be used by law enforcement.

In Kevin Benjamin Weier’s case, a YouTube livestream was the only evidence used to warrant his arrest. He just happened to be on camera, much like CatSweat was. His arrest, like CatSweat’s, demonstrates the impossibility of privacy when other people do not care for their own. Weier’s case also demonstrates law enforcement’s knowledge of the information pool that social media represents. Remember that federal officers were monitoring social media feeds for illicit activity. This practice stretches the power of law enforcement far beyond the cost and manpower constraints that previously limited traditional surveillance. When law enforcement can passively monitor social media feeds as a network of cameras, they are afforded a reach that would previously have cost the time of a great number of officers and a great deal of money. Instead of placing officers on the ground, the feds could simply watch YouTube livestreams. This not only costs less money, but is a more efficient form of surveillance and control.

Recall Foucault’s philosophy of power in his definition of panopticism — power that is visible but unverifiable in its activity. One can never be sure they are not under watch. Even if we are aware of a camera, is there anyone watching the screen? It’s hard to say. Yet, because people like Weier get arrested based on social media posts, we must assume we are under watch and act accordingly, as Foucault suggests. The system of surveillance, though, is far grander than Foucault’s definition, stretching to Deleuze’s conception; surveillance is no longer confined to security cameras or specific structures, but is instead all around us. This amplifies the visibility and unverifiable nature of surveillance power, thereby increasing the level of power itself.

Luke Cossman and others were identified allegedly setting fire to police vehicles in Philadelphia. Just like CatSweat, Cossman and associates were identified in someone else’s video, not their own. Their arrests, though, demonstrate more explicitly how the affordances designed for datafication on social media become dangerous when applied to law enforcement. Luke Cossman was Facebook friends with Steven Anderson.

The friends feature on Facebook is a clear example of a way of drawing more information into social platforms so that advertisers can create networks of consumers for use in targeted marketing. For the police, it represents a web of possible accomplices. This direct link between for-profit practices and police usage clearly demonstrates that law enforcement is merely using an existing environment in which SOCMINT is usable, not creating one.

Rebuttals

These case studies demonstrate three prongs of SOCMINT’s usability and its connection to the datafied environment created by platforms. First, the January 6th investigation demonstrates both a disregard for personal privacy and the connection of that attitude to a disregard for the privacy of others. The arrests carried out as a result of these attitudes solidify their consequential nature. As we have discussed, such attitudes are encouraged by platforms in order to bring in more personal data to sell to advertisers. Thus we see a link between this ecology of targeted marketing and the use of SOCMINT to arrest people. The BLM arrests demonstrate the power of social media intelligence, its effectiveness, and the relation of arrests directly to platform affordances designed to quantify social interaction. Weier’s case shows how law enforcement is already aware of SOCMINT and can use it without great expenditure. Kevin Anderson demonstrates how tagging, an affordance designed for social quantification, can be used by law enforcement to arrest a suspect.

It is clear, now, that law enforcement would not be able to use SOCMINT as effectively as they have without the environment created by social media platforms and the ideologies propagated by the companies that run them. In the pursuit of profit through targeted marketing, social media platforms have negligently created an environment in which we citizens monitor each other and submit documentation of that monitoring as a means of gaining social capital. The normalization of this behavior is born out of a normalization of disclosing one’s own information. In such an environment of unrelenting data disclosure, law enforcement agencies are able to use social media to investigate or even actively monitor a population without sacrificing manpower and time, the previous limits on police surveillance. What took the Stasi of East Germany one hundred thousand officers and five hundred thousand informants now only requires a few officers watching a YouTube livestream or feeding social media posts to a facial recognition algorithm.

The counter-argument to the concerns I have raised in this paper comes in four forms. Someone countering the ideas I present here might first take a technological stance, remarking that if a police officer is able to see a post on a public social media page, then collecting data from a volume of posts from that same page would also be acceptable. This argument assumes that digital public spaces are the same as physical public spaces; that seeing a public post is the same as seeing someone in a physical public space. I will address this assumption shortly. First, I will respond to the assumption that an aggregate of data can be treated the same as an individual data point.

As I have demonstrated, a data pool’s size and its effectiveness at offering judgements are directly proportional. Marketers want more data in order to make more effective judgements about consumers. To assume that because collecting a single data point does not violate privacy, collecting an aggregate of data does not either is a fallacy of composition (Tavani 2007, 15). Their use cases are entirely different. Such a counter-argument misunderstands the power of machine learning and the power of pattern recognition by such technology.

In the second formation of this counter-argument, those who support law enforcement use of social media intelligence would point to the positive outcomes of SOCMINT use. Companies like Clearview AI, which frequently partner with law enforcement, will tout the positive uses of their technology, pulling at the heartstrings of the people. Clearview cites use of their technology in Ukraine to identify dead soldiers, as well as in a case to catch a child predator (Clearview AI 2023). This strategy pulls the focus away from the danger of these technologies, relying on an aforementioned rationalization described by Couldry and Mejias. They can be used for good, as Clearview makes clear (ha). But, without regulation, they are still dangerous. These technologies, no matter how accurate, result in a proxy logic where events that are predicted to happen are the basis of action. Actions may be taken that are never necessitated.

Two instances of this come from the 2020 Black Lives Matter protests. Mike Avery was arrested for posting “We promise to do our very best to be safe and not do anything to get arrested,” with the charge that he was encouraging rioting (Farivar, Solon 2020). Avery was charged under the Anti-Riot Act of 1968, a law that does not require evidence of imminent lawless action, and held without bail. Despite neither inciting violence nor participating in violent action, Avery was charged; he was predicted to take unlawful action. The same goes for Chandler Wirostek, who was questioned by the FBI after he jokingly tweeted claiming to be the leader of antifa, a loose left-leaning movement with no structure or leadership (Farivar, Solon 2020). Actions were taken by law enforcement on the basis of predictions in these two cases, demonstrating the harmful possibilities of social media intelligence’s proxy logic. Such faults cannot be tolerated when they affect and influence the actions of law enforcement officers. Therefore, we must reject this counter-argument.

Third, a common counter to my points in this paper is that users consent to their data being used by platforms to inform advertising by agreeing to Privacy Policies. The argument at its core is that because users consent, any objection is unwarranted as users have agency over their use of the platform. This argument is reflected by students in Daniel Trottier’s work, who say that each user is responsible for their own privacy as they have privacy settings and agree to terms of use (Trottier 2012, 77). It would then follow that no change is necessary; users are already agreeing to the terms! To those who might use this argument, let me ask you frankly: how often do you read the terms and conditions of platforms you use? According to Pew Research Center, only twenty-two percent of Americans say they read privacy policies before agreeing to them (Auxier et al. 2019). If you fall into that twenty-two percent who say they read privacy policies, congratulations. Unfortunately, that cohort may be smaller than it declares itself to be. In a study published by ProPrivacy, only one percent of participants actually read a privacy policy that asked for naming rights of the user’s first-born child. Further, ProPrivacy found that seventy percent of participants lied, claiming to have read the agreement when they had not (Sandle 2020). Evidently, the majority of users do not know what they are agreeing to when clicking “I Agree” under a privacy policy.